I may have been quiet on here but not because I haven’t been doing lots of fun nerdy stuff. Unfortunately, there’s a fair amount of it that can’t be blogged about, hence the lack of new material here, but a problem came up the other day that was ~~a royal pain in the ass~~ pretty fun and interesting, and maybe some folks out there might be scratching their heads over it and appreciate there being something in the depths of t’interwebs to explain it.

Bonding is a pretty damn useful thing, especially to us NSM folks. Take a 1×1 tap and run the output cables up to a nice bit of tin running $distro_of_choice, a few minutes of tweaking interface config files, and hey presto! a bonded interface with both directions of traffic for Snort/Suricata/Bro/whatever to listen to, and your kit is safely out of line where the sysadmins can’t blame you when something breaks and takes out the internet (they’ll probably still try though).

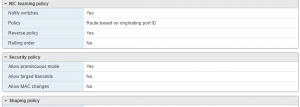

So far, so standard. The other day I needed to do this in a VM – no problem, I thought. VMware will let you pass traffic through to the guest; you need to put the vSwitch into promiscuous mode, which you can do in the vSwitch Security Policy, because none of the tapped traffic is addressed to your guest/sniffer (its interface won’t even have an IP assigned).

With each output of the tap assigned its own vSwitch which was attached to an individual interface on the guest, I created a bond interface to combine the two. In the very best tradition of here’s one I ~~made earlier~~ let someone else make and plagiarised shamelessly, you can read a good guide here. One notable exception – use mode 0 (round robin) and not active/passive – we want to combine the outputs, instead of having the second only work if the first fails.
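For anyone who would rather script it than edit interface config files, here is a rough sketch of the same mode 0 setup using iproute2 commands driven from Python. This is not what the guide does: the interface names (`bond0`, `eth1`, `eth2`), the `miimon` value and the helper names are assumptions for illustration, and it only prints the commands unless you turn `dry_run` off.

```python
import subprocess


def bond_commands(bond="bond0", slaves=("eth1", "eth2"), miimon_ms=100):
    """Return iproute2 commands that build a round-robin (mode 0) bond.

    All names and the miimon value are illustrative defaults.
    """
    cmds = [["ip", "link", "add", bond, "type", "bond",
             "mode", "balance-rr", "miimon", str(miimon_ms)]]
    for slave in slaves:
        # Take the interface down first; the bonding driver generally
        # refuses to enslave an interface that is still up.
        cmds.append(["ip", "link", "set", slave, "down"])
        cmds.append(["ip", "link", "set", slave, "master", bond])
    # No IP address is assigned anywhere: the bond only listens.
    cmds.append(["ip", "link", "set", bond, "up"])
    return cmds


def apply(cmds, dry_run=True):
    """Print each command, and run it (as root) when dry_run is False."""
    for cmd in cmds:
        print(" ".join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)
```

Calling `apply(bond_commands())` prints the six commands so you can eyeball them before running anything for real.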

So, having done that, I brought up the bond0 interface and… weirdness happened. I was only seeing one side of the traffic. tcpdump on the bond0 interface was only showing the responses, not the requests. The slaved interfaces told a similar story: one had traffic (inbound), and the other was silent. Odd. Next check: was the ESXi host seeing the traffic but not passing it through? Checking this requires the use of pktcap-uw rather than VMware’s implementation of tcpdump (tcpdump-uw), which will not let you look at traffic on individual vSwitches. This showed the traffic was indeed present.
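If you don’t fancy juggling several tcpdump sessions to spot the silent slave, the kernel’s per-interface receive counters tell the same story. A minimal sketch, assuming the usual Linux sysfs layout under `/sys/class/net`; the function names and the interface names are made up for the example.

```python
import time
from pathlib import Path


def snapshot(ifaces, sysfs_root="/sys/class/net"):
    """Read the current rx_packets counter for each interface."""
    return {
        iface: int(Path(sysfs_root, iface, "statistics", "rx_packets").read_text())
        for iface in ifaces
    }


def silent(before, after):
    """Return interfaces whose receive counter did not move between snapshots."""
    return [iface for iface in before if after[iface] == before[iface]]


def watch(ifaces, interval=5.0):
    """Take two snapshots `interval` seconds apart and report the quiet ones."""
    before = snapshot(ifaces)
    time.sleep(interval)
    return silent(before, snapshot(ifaces))
```

With live traffic on the tap, something like `watch(["eth1", "eth2"])` should come back empty when both directions are arriving, and name the dead slave when they aren’t.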

Proper head-scratching time now. The interface settings were all correct, the problem persisted through restarts of the interfaces, the networking service, even the OS. Next step was bringing up each interface manually one at a time; now it got even weirder. eth1 showed responses as expected. eth2 showed requests – awesome! bond0 showed… just the responses. Checked eth2 and it was now silent as the grave. Curses! This didn’t change when bond0 was shut down again; outbound traffic would only reappear when eth2 was brought up without bond0. Enabling bond0 killed it again until it was started without bond0 running. What the hell?

Having pretty much run out of ideas, a bit of experimentation was on the cards, starting with the ESXi config settings. This was clearly a stroke of genius, because upon setting MAC address changes to ‘accept’, it instantly started working. Why would this be?

One of the things that enabling bonding does is that the bond0 interface defaults to starting with the MAC of the first interface to join the bond. In round-robin mode, it then gives that same MAC to every other slave as it joins, so the whole bond sends and receives as a single interface; VMware’s (sensible) default is to reject changes like this, and as a result, it will stop delivering traffic to the interface it sees as having violated the restriction until the interface is bounced. Thus, the first slave to join will receive traffic because its MAC stays the same, and the second stops being sent data because the vSwitch has seen its MAC change. Permitting changes on the vSwitch means the MAC can be assigned as necessary.
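To make that concrete, here is a toy model of the behaviour described above. It is emphatically not VMware’s implementation, just the rule as I understand it boiled down to a few lines: a port keeps delivering frames only while the guest’s MAC matches the one it started with, unless changes are accepted. The class name, function name and MAC addresses are all invented for the example.

```python
from dataclasses import dataclass


@dataclass
class VSwitchPort:
    """Toy stand-in for one vSwitch port and its attached guest NIC."""
    initial_mac: str               # MAC the VM was configured with
    effective_mac: str             # MAC the guest is using right now
    accept_mac_changes: bool = False

    def delivers_frames(self):
        # With changes rejected, delivery stops once the MACs differ.
        return self.accept_mac_changes or self.effective_mac == self.initial_mac


def enslave(ports):
    """Mimic the bond: every slave takes on the first slave's MAC."""
    bond_mac = ports[0].initial_mac
    for port in ports:
        port.effective_mac = bond_mac
    return bond_mac


# Hypothetical MACs for eth1 and eth2.
macs = ["00:0c:29:00:00:01", "00:0c:29:00:00:02"]

rejecting = [VSwitchPort(m, m) for m in macs]
enslave(rejecting)
print([p.delivers_frames() for p in rejecting])   # [True, False]

accepting = [VSwitchPort(m, m, accept_mac_changes=True) for m in macs]
enslave(accepting)
print([p.delivers_frames() for p in accepting])   # [True, True]
```

The first slave never changes MAC so it carries on regardless, while the second only keeps its traffic once the vSwitch is told to accept the change, which matches what tcpdump was showing.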

TLDR: If you want to use a bonded interface in an ESXi guest like this, you must set ‘MAC address changes’ to ‘accept’ on the vSwitches the slave interfaces connect to.